Correlation Is Not Causation, Illustrated With Cat Food

Morgan Voss

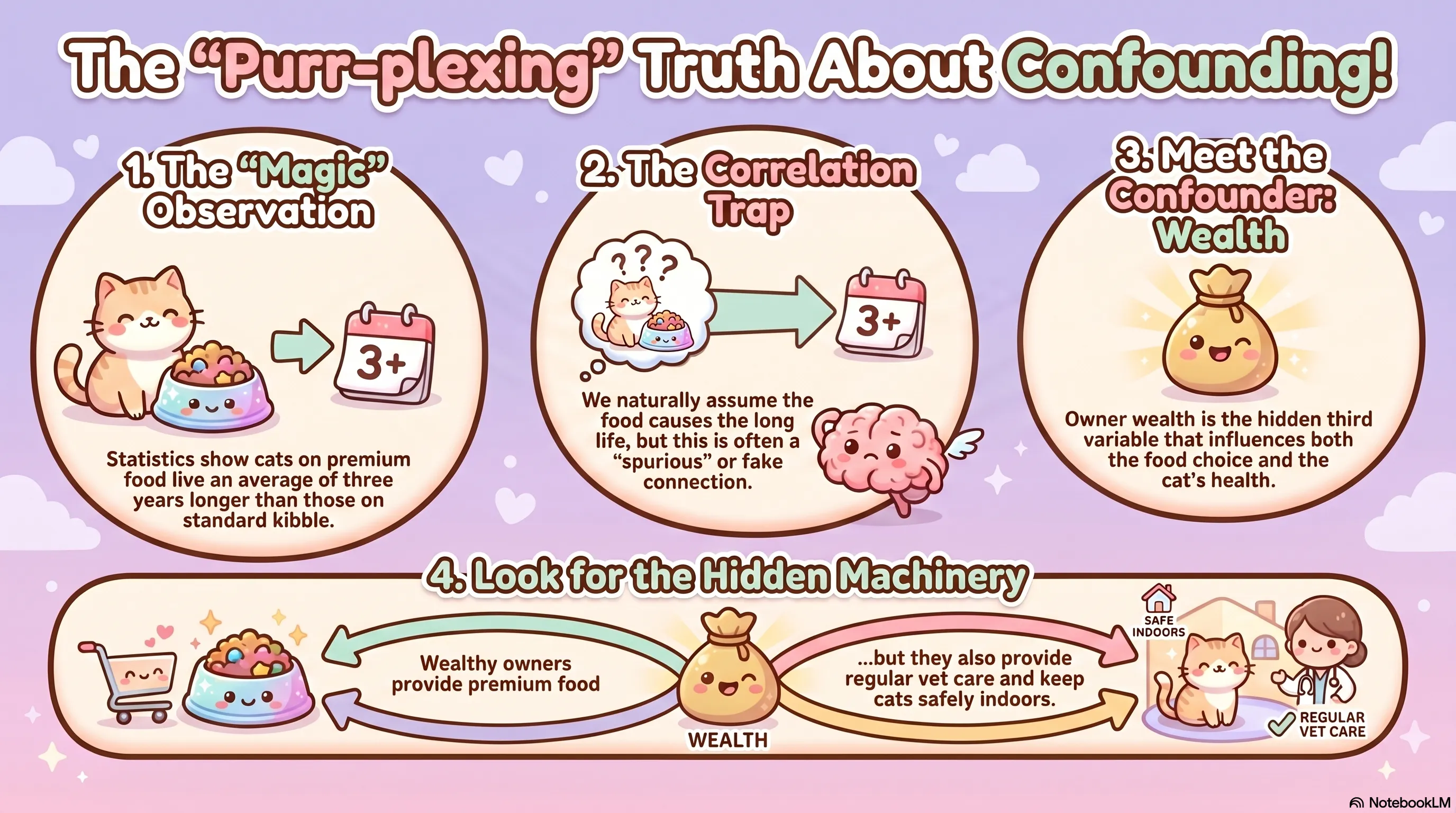

Studies on pet nutrition are frequently misread (with the best intentions). Consider a straightforward one: cats fed premium food live, on average, three years longer than cats on standard kibble. The natural conclusion is that the food is responsible. It probably isn't.

The correlation/causation problem is older than the internet's enthusiasm for it. The interesting part isn't the slogan. It's the machinery that produces the illusion, and the tools available for dismantling it.

Confounding

The reason the food correlation is probably spurious comes down to a third variable: owner wealth.

Wealthy owners are more likely to buy premium food. They are also more likely to schedule regular preventive veterinary care, keep their cats indoors, and manage chronic conditions early. Longevity follows wealth along several paths that have nothing to do with diet. The food is just the most visible marker of that wealth.

This is a confounder: a variable that influences both the treatment (food type) and the outcome (longevity). When a confounder is present and unaccounted for, the observed correlation mixes any real causal effect with the confounder's influence, and it may be nothing more than a statistical shadow of the confounder.

The firefighter example makes the structure plain: the more firefighters sent to a fire, the greater the property damage tends to be. Fire size drives both the size of the response and the destruction. Firefighters don't cause the damage; large fires bring both more firefighters and more damage.
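
The cat food version of this structure can be simulated in a few lines. The sketch below is a toy model, not an analysis of real data: the purchase rates, lifespans, and function names (`simulate_cats`, `naive_gap`) are assumptions chosen for illustration. Food has no effect on lifespan in the model, yet premium-fed cats still appear to live longer.

```python
import random

def simulate_cats(n=20000, seed=0):
    """Simulate cats where wealth drives both food choice and lifespan.

    Food has NO effect on lifespan in this toy model (an assumption
    made for illustration, not a claim about real cat food).
    """
    rng = random.Random(seed)
    cats = []
    for _ in range(n):
        wealthy = rng.random() < 0.5
        # Wealthy owners buy premium food more often (assumed rates).
        premium = rng.random() < (0.8 if wealthy else 0.2)
        # Lifespan depends on wealth only; food does not appear here.
        lifespan = rng.gauss(16.0 if wealthy else 12.0, 2.0)
        cats.append((wealthy, premium, lifespan))
    return cats

def mean(values):
    values = list(values)
    return sum(values) / len(values)

def naive_gap(cats):
    """Mean lifespan of premium-fed cats minus standard-fed cats."""
    premium = mean(life for _, p, life in cats if p)
    standard = mean(life for _, p, life in cats if not p)
    return premium - standard

cats = simulate_cats()
print(f"naive premium-vs-standard gap: {naive_gap(cats):.2f} years")
```

With these assumed rates the expected gap is about 2.4 years (0.8 × 16 + 0.2 × 12 against 0.2 × 16 + 0.8 × 12), all of it produced by wealth.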

Causal Directed Acyclic Graphs

A confounder is easier to reason about once it's drawn. A Causal DAG (Directed Acyclic Graph) maps the assumed causal relationships between variables using arrows. For the cat food study:

Wealth → Premium Food

Wealth → Longevity

Premium Food --?→ Longevity

The question mark is the relationship we're trying to isolate. Wealth is upstream of both variables, creating a back-door path: an indirect route from food to longevity that runs through wealth rather than through any biological mechanism of the food itself.

The back-door path needs to be closed before the data tells us anything about causation.
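
For a graph this small the back-door path is visible by eye, but the idea can be made mechanical. The following is a minimal sketch, assuming the DAG is stored as a list of (cause, effect) pairs; `EDGES` and `backdoor_paths` are hypothetical names, and the function only enumerates candidate paths. It does not check whether a path is already blocked.

```python
# Edges of the assumed DAG, written as (cause, effect).
EDGES = [
    ("Wealth", "Premium Food"),
    ("Wealth", "Longevity"),
    ("Premium Food", "Longevity"),  # the arrow under question
]

def backdoor_paths(edges, treatment, outcome):
    """List simple paths from treatment to outcome whose first edge
    points INTO the treatment. Edge direction is ignored after that
    first step."""
    parents = {}
    neighbors = {}
    for cause, effect in edges:
        parents.setdefault(effect, set()).add(cause)
        neighbors.setdefault(cause, set()).add(effect)
        neighbors.setdefault(effect, set()).add(cause)

    paths = []

    def walk(node, path):
        if node == outcome:
            paths.append(path)
            return
        for nxt in sorted(neighbors.get(node, ())):
            if nxt not in path:
                walk(nxt, path + [nxt])

    # A back-door path must leave the treatment through one of its parents.
    for parent in sorted(parents.get(treatment, ())):
        walk(parent, [treatment, parent])
    return paths

print(backdoor_paths(EDGES, "Premium Food", "Longevity"))
# prints [['Premium Food', 'Wealth', 'Longevity']]
```

The single path returned runs through Wealth, which is the variable that has to be held constant.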

Conditioning

Closing the back-door path means holding wealth constant when making the comparison. In probability notation, this is the difference between:

$$P(\text{Longevity} \mid \text{Food})$$

and

$$P(\text{Longevity} \mid \text{Food}, \text{Wealth})$$

The first asks what the probability of longevity is given food type, across all cats. It includes the confounding effect of wealth. The second asks whether, among cats whose owners have the same wealth level, food type still matters.

If the longevity gap disappears when wealth is held constant, the food was not doing the work. The correlation existed because wealthy owners were buying both the food and the outcomes.
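
A disappearing gap looks like this in practice. The sketch below uses simulated data under assumed numbers (a two-level wealth variable, made-up purchase rates and lifespans, and hypothetical helper names) in which food has no effect by construction. It compares lifespans across all cats, then again within each wealth level.

```python
import random

def simulate_cats(n=20000, seed=1):
    """Toy data: wealth drives food choice and lifespan; food does nothing.

    All rates and lifespans here are illustrative assumptions.
    """
    rng = random.Random(seed)
    cats = []
    for _ in range(n):
        wealthy = rng.random() < 0.5
        premium = rng.random() < (0.8 if wealthy else 0.2)
        lifespan = rng.gauss(16.0 if wealthy else 12.0, 2.0)
        cats.append({"wealthy": wealthy, "premium": premium, "lifespan": lifespan})
    return cats

def lifespan_gap(cats):
    """Mean lifespan on premium food minus mean lifespan on standard food."""
    premium = [c["lifespan"] for c in cats if c["premium"]]
    standard = [c["lifespan"] for c in cats if not c["premium"]]
    return sum(premium) / len(premium) - sum(standard) / len(standard)

cats = simulate_cats()

# Comparison across all cats: wealth is left free to vary.
overall_gap = lifespan_gap(cats)

# Comparison within each wealth level: wealth is held constant.
gap_by_wealth = {
    wealthy: lifespan_gap([c for c in cats if c["wealthy"] == wealthy])
    for wealthy in (True, False)
}

print(f"overall gap:         {overall_gap:.2f} years")
print(f"gap, wealthy owners: {gap_by_wealth[True]:.2f} years")
print(f"gap, other owners:   {gap_by_wealth[False]:.2f} years")
```

The overall gap comes out at a couple of years, while the within-group gaps sit near zero. Real data rarely splits this cleanly, and wealth is seldom a two-level variable, so treat this as the shape of the argument rather than a recipe.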

This adjustment is called conditioning on the confounder, and it requires that you have actually measured the confounding variable. If it isn't in your dataset, you cannot condition on it. You can suspect the confounding, acknowledge it, and decline to make causal claims. That is the appropriate response in that situation.

Collider Bias

Confounding isn't the only way correlation misleads. Sometimes the distortion runs in the opposite direction, introduced not by an omitted variable but by being too selective about which data enters the analysis at all.

Consider the observation that attractive celebrities tend to be less talented, and talented celebrities tend to be less attractive. This sounds plausible. It is an artifact of selection, not a real trade-off.

Fame acts as a collider: to become a celebrity, a person generally needs to be talented, attractive, or both. People who are neither don't appear in the pool. Among those who did make it through that filter, talent and attractiveness become negatively correlated, because either quality alone was sufficient for entry.

In the DAG:

Talent → Fame ← Attractiveness

Fame is downstream of both variables. Conditioning on it (by restricting the analysis to celebrities) induces a spurious negative correlation between talent and attractiveness that doesn't exist in the general population. This is Berkson's paradox.

It appears in any analysis where the sample is filtered on a variable that is itself caused by the things being compared. Hospital studies are a classic site: two conditions may appear negatively correlated within the admitted patient population simply because either one was sufficient for admission. The population has already been culled, and the analysis doesn't account for it.
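
The celebrity version of this selection effect is easy to reproduce. In the sketch below, talent and attractiveness are drawn independently, and a person counts as famous if either score clears a cutoff. The cutoff of 1.5, the normal distributions, and the `pearson` helper are assumptions made for illustration.

```python
import random

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

rng = random.Random(42)

# Talent and attractiveness are independent in the general population.
people = [(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(50000)]

# Assumed fame rule: clearing the cutoff on either trait is enough.
THRESHOLD = 1.5
celebrities = [(t, a) for t, a in people if t > THRESHOLD or a > THRESHOLD]

r_population = pearson(*zip(*people))
r_celebrities = pearson(*zip(*celebrities))

print(f"correlation, everyone:    {r_population:+.2f}")
print(f"correlation, celebrities: {r_celebrities:+.2f}")
```

The population correlation stays close to zero, while the celebrity-only correlation is strongly negative, even though nothing in the simulation links the two traits.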

The gap between "these two things move together" and "this thing causes that thing" is not small. Closing it requires specifying the causal structure of the system, measuring the right variables, and being honest about what remains unobserved. The slogan is easy. The work is not.
