The Binomial Distribution: Counting Successes in Fixed Trials

Morgan Voss

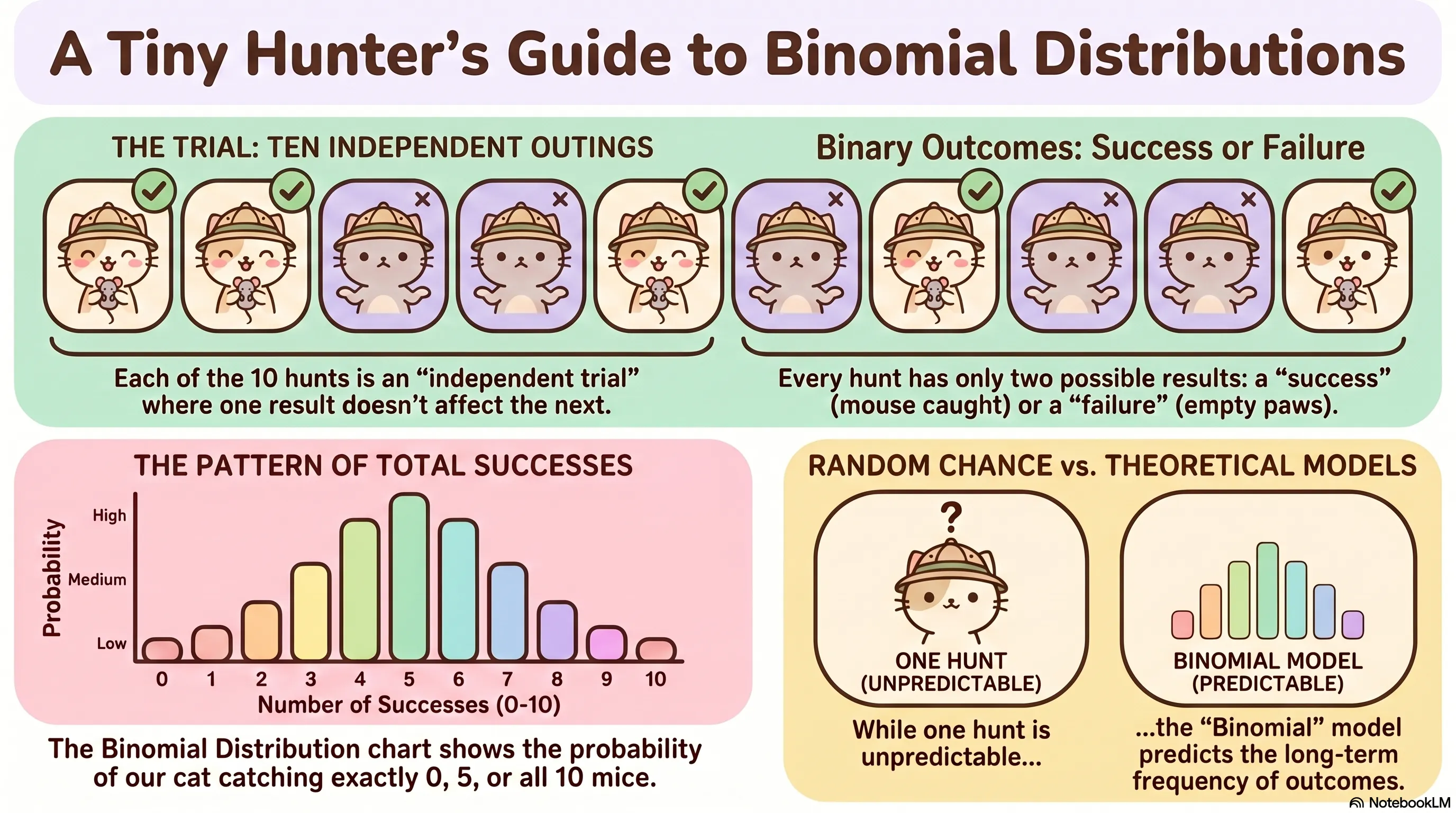

A cat that hunts is running an experiment, whether she knows it or not. Each pounce is a trial. It either succeeds or it does not. The outcome of one attempt does not influence the next. And if you watch long enough, you start asking a different question: not whether she catches something, but how many she catches in a given session.

That is the question the binomial distribution answers.

The Setup

The binomial distribution applies when four conditions hold. There are exactly $n$ trials. Each trial results in one of two outcomes, conventionally called success and failure. The trials are independent. And the probability of success, $p$, is the same on every trial.

These four conditions define a sequence of Bernoulli trials, named for the Swiss mathematician Jacob Bernoulli. A single Bernoulli trial is just a coin flip with a possibly unfair coin. The binomial distribution describes what happens when you run $n$ of them and count the successes.

Call that count $X$. The random variable $X$ can take any integer value from $0$ to $n$.
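
To make the setup concrete, here is a minimal simulation sketch in Python. The function name `simulate_session`, the seed, and the values of eight trials with a 0.3 success probability are illustrative assumptions, not part of the definition.

```python
import random

def simulate_session(n, p, rng):
    """Run n independent Bernoulli trials with success probability p
    and return the number of successes."""
    return sum(1 for _ in range(n) if rng.random() < p)

rng = random.Random(42)  # fixed seed so the run is repeatable
counts = [simulate_session(8, 0.3, rng) for _ in range(10_000)]

print(min(counts), max(counts))    # every count lies between 0 and n
print(sum(counts) / len(counts))   # sample average of the success count
```

Each call to `simulate_session` produces one draw of the success count; repeating it many times gives an empirical picture of how that count is distributed.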

The PMF

The probability mass function (PMF) gives the probability of exactly $k$ successes:

$$P(X = k) = \binom{n}{k} p^k (1-p)^{n-k}$$

The formula has two parts, and both matter.

The term $p^k (1-p)^{n-k}$ is the probability of one specific sequence containing exactly $k$ successes and $n-k$ failures. If $n = 5$ and $k = 3$, for instance, one such sequence is success-success-success-failure-failure, with probability $p^3 (1-p)^2$.

But there are many sequences with exactly three successes. The binomial coefficient $\binom{n}{k}$, read as "$n$ choose $k$," counts how many:

$$\binom{n}{k} = \frac{n!}{k!\,(n-k)!}$$

Multiply the two pieces together and you have the probability of getting $k$ successes in any order. The formula is not arbitrary. It follows directly from counting.
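
The two-part structure translates almost directly into code. The sketch below uses Python's standard `math.comb` for the counting part; the function name `binomial_pmf` and the choice of a 0.5 success probability in the usage lines are illustrative.

```python
from math import comb

def binomial_pmf(k, n, p):
    """Probability of exactly k successes in n trials with success probability p."""
    if not 0 <= k <= n:
        return 0.0
    # (number of orderings) * (probability of any one ordering)
    return comb(n, k) * p**k * (1 - p)**(n - k)

# Five trials, three successes: 10 distinct orderings
print(comb(5, 3))               # 10
print(binomial_pmf(3, 5, 0.5))  # 0.3125
```

With a fair coin, each of the 10 orderings has probability 1/32, which gives 10/32 = 0.3125.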

A Worked Example

Suppose a cat succeeds on roughly 30% of pounce attempts, and makes eight attempts in a session. Each attempt is independent. We want $P(X = 3)$: the probability of exactly three successes.

$$P(X = 3) = \binom{8}{3} (0.3)^3 (0.7)^5 = 56 \times 0.027 \times 0.16807 \approx 0.254$$

About a 25% chance of exactly three successes. The distribution also gives us the full picture: we can compute $P(X = 0)$ through $P(X = 8)$, and all nine values sum to one.
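
The full picture for this example can be tabulated in a few lines. This is a small sketch using only the standard library; the variable names are illustrative, and the loop covers every possible count from 0 through 8.

```python
from math import comb

n, p = 8, 0.3  # eight attempts, 30% success rate, as in the example

# Probability of each possible number of successes, k = 0, 1, ..., n
pmf = [comb(n, k) * p**k * (1 - p)**(n - k) for k in range(n + 1)]

for k, prob in enumerate(pmf):
    print(f"P(X = {k}) = {prob:.4f}")

print(f"total = {sum(pmf):.4f}")
```

The entry for three successes comes out near 0.2541, and the total is 1 up to floating-point rounding.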

Expected Value and Variance

The expected number of successes is:

$$E[X] = np$$

For our hunting cat, that is $8 \times 0.3 = 2.4$ successes per session. Not a whole number, which is fine -- expected value is not a prediction of any single outcome. It is the long-run average.

The variance is:

$$\operatorname{Var}(X) = np(1-p)$$

And the standard deviation is $\sqrt{np(1-p)}$. Both depend on $p$ in a way worth noting: the variance is largest when $p = 0.5$ and shrinks toward zero as $p$ approaches either extreme, $0$ or $1$. When success is nearly certain or nearly impossible, there is little variability -- you already know roughly what will happen.
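
One way to check the mean and variance formulas is to compute both quantities directly from the PMF and compare. The sketch below does that for the hunting-cat numbers (eight trials, success probability 0.3), then prints the variance for a few hypothetical success probabilities to show where it peaks.

```python
from math import comb, sqrt

def binomial_pmf(k, n, p):
    return comb(n, k) * p**k * (1 - p)**(n - k)

n, p = 8, 0.3

# Mean and variance computed from the definition of expectation
mean = sum(k * binomial_pmf(k, n, p) for k in range(n + 1))
var = sum((k - mean) ** 2 * binomial_pmf(k, n, p) for k in range(n + 1))

print(mean, n * p)            # both close to 2.4
print(var, n * p * (1 - p))   # both close to 1.68
print(sqrt(var))              # standard deviation, about 1.296

# Variance for fixed n across several success probabilities
for q in (0.01, 0.1, 0.3, 0.5, 0.7, 0.9, 0.99):
    print(q, n * q * (1 - q))
```

The last loop shows the symmetry around 0.5: a success probability of 0.3 and one of 0.7 give the same variance, and both tails of the range give values near zero.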

Assumptions and When They Break

The binomial model is exact when the four conditions are exactly met. In practice, they rarely are. What matters is how badly they are violated.

Independence is the most commonly broken condition. Whether one hunting attempt affects the next depends on the prey behavior, the cat's fatigue, and a dozen other things. If trials influence each other, the binomial is at best an approximation.

The fixed-$p$ assumption can also fail. A cat may improve over the course of a session, or tire. If $p$ drifts, the binomial is still sometimes useful as a baseline, but it will misrepresent the tails of the distribution.
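
The effect of a drifting success probability can be illustrated with an exact calculation. The sketch below is built on assumptions: the per-attempt probabilities for a tiring cat are hypothetical numbers chosen to average 0.30, the trials are still treated as independent, and the function name `count_distribution` is illustrative. It compares the exact distribution of the success count against a binomial baseline that uses the average probability.

```python
from math import comb

def count_distribution(probs):
    """Exact distribution of the number of successes in independent trials
    whose success probabilities may differ from trial to trial."""
    dist = [1.0]  # before any trial: zero successes with probability 1
    for p in probs:
        new = [0.0] * (len(dist) + 1)
        for k, mass in enumerate(dist):
            new[k] += mass * (1 - p)   # this trial fails
            new[k + 1] += mass * p     # this trial succeeds
        dist = new
    return dist

# Hypothetical tiring cat: success probability falls from 0.51 to 0.09,
# averaging 0.30 over eight attempts.
drifting = [0.51, 0.45, 0.39, 0.33, 0.27, 0.21, 0.15, 0.09]
n = len(drifting)
p_bar = sum(drifting) / n

exact = count_distribution(drifting)
baseline = [comb(n, k) * p_bar**k * (1 - p_bar)**(n - k) for k in range(n + 1)]

for k in range(n + 1):
    print(f"k={k}: drifting={exact[k]:.4f}  binomial={baseline[k]:.4f}")
```

Both distributions have the same mean, but in this particular scenario the drifting version puts less probability on the extreme counts (zero successes and eight successes) than the binomial baseline does. Other kinds of drift, or drift combined with dependence between trials, can distort the tails differently.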

The Normal Approximation

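For large $n$, the binomial distribution is well approximated by a normal distribution with mean $np$ and standard deviation $\sqrt{np(1-p)}$. The approximation is reliable when both $np$ and $n(1-p)$ are large enough; common rules of thumb require each to be at least 5 or 10. Outside that range, the exact binomial is preferable.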
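
To see how the approximation behaves, the sketch below compares exact cumulative probabilities with their normal counterparts for a few illustrative cases. The half-unit continuity correction is an added assumption, a standard refinement when approximating a discrete count with a continuous curve; the function names are illustrative.

```python
from math import comb, erf, sqrt

def binomial_cdf(k, n, p):
    """Exact probability of at most k successes, as a finite sum of PMF terms."""
    return sum(comb(n, i) * p**i * (1 - p)**(n - i) for i in range(k + 1))

def normal_cdf(x, mu, sigma):
    """CDF of a normal distribution, written with the error function."""
    return 0.5 * (1 + erf((x - mu) / (sigma * sqrt(2))))

def normal_approx_cdf(k, n, p):
    """Normal approximation to the same probability.
    The +0.5 is a continuity correction (an assumption of this sketch)."""
    return normal_cdf(k + 0.5, n * p, sqrt(n * p * (1 - p)))

# (trials, success probability, cutoff count): small, medium, and larger cases
for n, p, k in [(8, 0.3, 3), (100, 0.3, 30), (400, 0.3, 120)]:
    print(n, binomial_cdf(k, n, p), normal_approx_cdf(k, n, p))
```

The gap between the two columns indicates how much is lost by switching to the normal curve for a given number of trials and success probability.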

The binomial probability calculator computes exact values for any $n$, $k$, and $p$ using log-space arithmetic to handle large $n$ without numerical underflow.
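
The calculator's implementation is not shown here, so the following is only one plausible way to do log-space arithmetic, not a description of its actual code. The sketch takes the logarithm of each factor, using the standard `math.lgamma` for the factorials, and exponentiates once at the end. The function names are illustrative, and the success probability is assumed to lie strictly between 0 and 1.

```python
from math import exp, lgamma, log

def log_binomial_pmf(k, n, p):
    """Natural log of the probability of exactly k successes.
    Assumes 0 < p < 1 and 0 <= k <= n."""
    # log of the binomial coefficient: lgamma(m + 1) equals log(m!)
    log_coeff = lgamma(n + 1) - lgamma(k + 1) - lgamma(n - k + 1)
    return log_coeff + k * log(p) + (n - k) * log(1 - p)

def binomial_pmf_stable(k, n, p):
    return exp(log_binomial_pmf(k, n, p))

print(binomial_pmf_stable(3, 8, 0.3))             # about 0.2541
print(binomial_pmf_stable(30_000, 100_000, 0.3))  # a small but nonzero probability
print(0.3 ** 30_000)                              # the direct power underflows to 0.0
```

Working with sums of logarithms keeps every intermediate value in a comfortable floating-point range, whereas multiplying the raw factors would hit zero long before the final product is formed.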

What Makes It Useful

The binomial distribution is not especially subtle, but its simplicity is the point. When the four conditions hold -- or approximately hold -- it is the right model, and it is completely tractable. The PMF, the expected value, and the variance all have closed forms, and the CDF is a finite sum of PMF terms.

The harder question is always whether the conditions hold. The binomial distribution gives the right answer to the right question. Identifying the right question first is the more consequential step.